Over a decade ago, Tatham Oddie pushed the first commit, followed closely by my commit of the starting code, to what would become httpstat.us. Fun fact - this was originally a Ruby app with a database, but it was rewritten the following month in C# on ASP.NET MVC3.

Years went by. I kept renewing the domain and paying for the Azure resources, and the codebase was upgraded to the latest .NET Framework runtimes, but it kind of just… existed.

Eventually Mickaël Derriey ported it to .NET Core but because of reasons, this never got deployed, and it just kind of sat there.

Well, at the end of 2021 I had some time on my hands, so I decided it was time to finish what’d been started, port to .NET 6 and run it as a containerised app.

Porting to .NET 6 was a really straightforward process: just change the Target Framework Moniker to net6.0 and update the GitHub Actions, and we were done. The real fun came with deploying it to Azure as a container.

My goal was to deploy it as a custom App Service container and then make that container available for anyone who wants to self-host the site.

Site goes boom

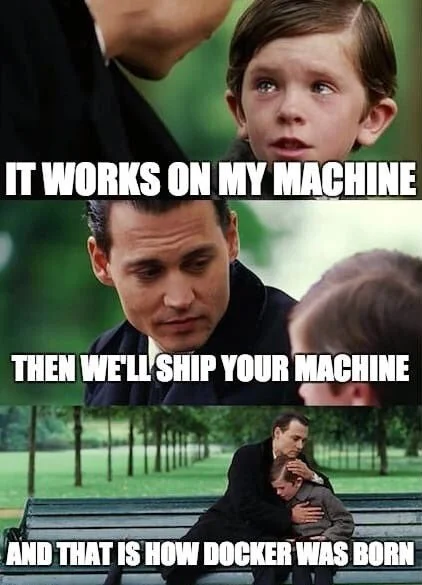

With the Dockerfile created, a new GitHub Actions pipeline to build and publish container images, and a step to update the App Service to use them, I assumed everything would be all good. After all, I was running it locally in Docker, so it should work in production using Docker, right?

No, that’s not what happened. The App Service would time out on startup. After countless deployments, pulling the image locally to test it (it worked locally) and increasingly deep dives into the App Service logging components, I was out of ideas as to why it wouldn’t start… until I got a breakthrough.

How App Service validates container startup

I decided to sandbox the problem in a brand new .NET 6 app, and add in the code for httpstat.us piece by piece to find the offending line of code. I got to the point where I would add the logic for handling status codes, but as soon as it was added I’d get the following logs (this is from the sample I was rebuilding):

2021-12-15T23:39:06.601Z INFO - Starting container for site

2021-12-15T23:39:06.606Z INFO - docker run -d -p 80:80 --name aapowell-dotnet6-mvc_0_5778b9ac -e WEBSITES_ENABLE_APP_SERVICE_STORAGE=false -e DOCKER_CUSTOM_IMAGE_NAME=registry20211215115650.azurecr.io/webapplication1:20211215231822 -e WEBSITE_SITE_NAME=aapowell-dotnet6-mvc -e WEBSITE_AUTH_ENABLED=False -e PORT=80 -e WEBSITE_ROLE_INSTANCE_ID=0 -e WEBSITE_HOSTNAME=aapowell-dotnet6-mvc.azurewebsites.net -e WEBSITE_INSTANCE_ID=80fa370e688dca2b88312acd83eb0059bdb22388056f36ce8b5a46f963d6eec6 registry20211215115650.azurecr.io/webapplication1:20211215231822

2021-12-15T23:39:06.609Z INFO - Logging is not enabled for this container.

Please use https://aka.ms/linux-diagnostics to enable logging to see container logs here.

2021-12-15T23:39:12.965Z INFO - Initiating warmup request to container aapowell-dotnet6-mvc_0_5778b9ac for site aapowell-dotnet6-mvc

2021-12-15T23:39:46.036Z INFO - Waiting for response to warmup request for container aapowell-dotnet6-mvc_0_5778b9ac. Elapsed time = 33.0710629 sec

2021-12-15T23:40:01.435Z INFO - Waiting for response to warmup request for container aapowell-dotnet6-mvc_0_5778b9ac. Elapsed time = 48.4703022 sec

2021-12-15T23:40:16.762Z INFO - Waiting for response to warmup request for container aapowell-dotnet6-mvc_0_5778b9ac. Elapsed time = 63.7977782 sec

2021-12-15T23:40:33.149Z INFO - Waiting for response to warmup request for container aapowell-dotnet6-mvc_0_5778b9ac. Elapsed time = 80.1846337 sec

2021-12-15T23:40:48.385Z INFO - Waiting for response to warmup request for container aapowell-dotnet6-mvc_0_5778b9ac. Elapsed time = 95.4199035 sec

2021-12-15T23:41:09.251Z INFO - Waiting for response to warmup request for container aapowell-dotnet6-mvc_0_5778b9ac. Elapsed time = 116.2859279 sec

2021-12-15T23:41:24.489Z INFO - Waiting for response to warmup request for container aapowell-dotnet6-mvc_0_5778b9ac. Elapsed time = 131.5245851 sec

2021-12-15T23:41:48.387Z INFO - Waiting for response to warmup request for container aapowell-dotnet6-mvc_0_5778b9ac. Elapsed time = 155.4220304 sec

2021-12-15T23:42:03.615Z INFO - Waiting for response to warmup request for container aapowell-dotnet6-mvc_0_5778b9ac. Elapsed time = 170.6498268 sec

2021-12-15T23:42:18.827Z INFO - Waiting for response to warmup request for container aapowell-dotnet6-mvc_0_5778b9ac. Elapsed time = 185.8618694 sec

2021-12-15T23:42:34.047Z INFO - Waiting for response to warmup request for container aapowell-dotnet6-mvc_0_5778b9ac. Elapsed time = 201.0824415 sec

2021-12-15T23:42:49.304Z INFO - Waiting for response to warmup request for container aapowell-dotnet6-mvc_0_5778b9ac. Elapsed time = 216.338939 sec

2021-12-15T23:43:03.556Z ERROR - Container aapowell-dotnet6-mvc_0_5778b9ac for site aapowell-dotnet6-mvc did not start within expected time limit. Elapsed time = 230.5910478 sec

2021-12-15T23:43:03.558Z ERROR - Container aapowell-dotnet6-mvc_0_5778b9ac didn't respond to HTTP pings on port: 80, failing site start. See container logs for debugging.

2021-12-15T23:43:03.566Z INFO - Stopping site aapowell-dotnet6-mvc because it failed during startup.

The container starts, but the warmup request never gets a response, so App Service kills the container after 230 seconds, which is the default timeout (yes, I tried increasing the startup timeout; all that did was make it take longer before I knew it had failed).

With the help of a bunch of Console.WriteLine statements, I was able to watch the startup process of the App Service, and I noticed a request coming in for something I didn’t recognise, something like /robots933456.txt, which doesn’t exist in the container. Weird, nothing I’m doing makes that request, so what is it?

Some web searching later, I landed on the App Service docs explaining that file. It turns out that when App Service starts the container, it probes for this file and waits for a 404 response. So, why did it break for me?

Well, because there’s a bug in httpstat.us’s route handling. First, some background to the way the site works. Any request that comes in that could be a status code is either matched to known ones, or parsed as an unknown and returned. This means that you could request a status code of 999 if you wanted, and we’ll send you back that as a status code. This is really just to help people who are using non-standard web server configurations, rather than just being strictly standards compliant.

But as I said, there was a bug. The routing matched anything after the / that we didn’t have a predefined route for (of which we don’t really have many), not just numeric values. The request for /robots933456.txt was therefore treated as a status code, and since it’s not a valid number, the status code came out as 0. App Service received 0 rather than the 404 it was expecting, so it kept waiting until it eventually timed out.

I added in an int route constraint, deployed and we were finally working again! 🎉
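To make the difference concrete, here's a minimal Python sketch of the two routing behaviours. It is not the httpstat.us code (that's C#), and the function names `catch_all_route` and `int_constrained_route` are made up for illustration; the fallback to 0 simply models the behaviour described above.

```python
def catch_all_route(path: str) -> int:
    """Model of the buggy route: any unmatched path is treated as a status code."""
    segment = path.lstrip("/")
    try:
        return int(segment)
    except ValueError:
        # Assumption: non-numeric input falls back to 0, as described in the post.
        return 0


def int_constrained_route(path: str) -> int:
    """Model of the fixed route: only all-digit segments match, the rest is a 404."""
    segment = path.lstrip("/")
    if segment.isascii() and segment.isdigit():
        return int(segment)
    # The route does not match, so the framework answers with Not Found.
    return 404


if __name__ == "__main__":
    for path in ("/200", "/999", "/robots933456.txt"):
        print(path, catch_all_route(path), int_constrained_route(path))
```

With the constraint in place, numeric paths such as /999 still behave as before, while the App Service probe falls through to a plain 404.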

Other changes

For a little over a month now the site has been running .NET 6 in a container and it seems to be going just fine. I need to learn how to use App Insights properly to understand the performance metrics, but in the last hour (as of time of writing) almost 400k requests were served, which seems pretty decent.

If you want to self-host it, you can run the same Docker image that runs in production; it’s available via GitHub Packages.

The other notable changes are:

- Response Transfer-Encoding is chunked, which is the default for Kestrel
- There’s no more X-Served-By header; that came from IIS and we’re not using that anymore
  - I’d recommend you use the Server header, which is standard
- It’s HTTPS only, as it should be
- I haven’t tested CORS, but it should work
  - If it doesn’t, open an issue

There are probably other things that have changed, but that’s the stuff I’m at least aware of.

Next steps

Now that the major rework has been done and the codebase modernised, my next step is to tackle the fair use policy which I’d outlined at the start of 2021.

I still don’t have a timeline for completing that work, as I need to look at how best to roll it out without significantly impacting current users. In the meantime, chime in on the GitHub issue if you’ve got any questions or feedback on the policy.